The Digital Universe: Physical Anomalies and the Simulation Hypothesis

The idea that we are living in a computer simulation is no longer confined to the fringes of science fiction. As we peel back the layers of the subatomic world, we find that the universe doesn’t behave like a collection of solid objects, but rather like a highly optimized system of information processing.

If reality is a program, where is the evidence in the code? From the "lazy rendering" of quantum mechanics to the mathematical constants that repeat across every scale of existence, the signs are hiding in plain sight.

1. The Observer Effect: Quantum "Lazy Rendering"

In high-end video game design, developers use culling techniques such as frustum culling and occlusion culling. To save processing power, the engine renders only the objects the player can currently see. The rest of the world exists only as potential data or low-resolution proxies until it enters the field of view. Our universe appears to use much the same optimization strategy.
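As a rough illustration of the game-engine side of this analogy, the sketch below implements a 2D view-cone test, the simplest form of culling. The `Entity` class, the function names, and the sample world are all made up for the example; real engines work in 3D and also test whether objects are hidden behind others.

```python
import math
from dataclasses import dataclass


@dataclass
class Entity:
    """A hypothetical object in a flat 2D world."""
    name: str
    x: float
    y: float


def is_in_view(camera_xy, facing_deg, fov_deg, entity):
    """Return True if the entity lies inside the camera's field of view."""
    dx = entity.x - camera_xy[0]
    dy = entity.y - camera_xy[1]
    angle_to_entity = math.degrees(math.atan2(dy, dx))
    # Smallest signed difference between the two headings, in [-180, 180).
    delta = (angle_to_entity - facing_deg + 180.0) % 360.0 - 180.0
    return abs(delta) <= fov_deg / 2.0


def render_frame(camera_xy, facing_deg, fov_deg, world):
    """Return the names of the entities that would be drawn this frame."""
    return [e.name for e in world if is_in_view(camera_xy, facing_deg, fov_deg, e)]


world = [Entity("tree", 10, 1), Entity("house", -5, 0), Entity("rock", 3, 9)]

# Camera at the origin with a 90-degree field of view.
looking_east = render_frame((0.0, 0.0), 0.0, 90.0, world)
looking_north = render_frame((0.0, 0.0), 90.0, 90.0, world)
print(looking_east, looking_north)
```

Everything outside the cone is skipped entirely, which is where the processing savings come from.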

The Double-Slit Experiment: Reality Under Observation

The most cited evidence for this is the Double-Slit Experiment. When scientists fire electrons one at a time at a barrier with two slits, the hits on the screen behind it build up into an interference pattern, as if each electron had passed through both slits like a wave. However, the moment a detector (an "observer") is placed at the slits to record which path each electron takes, the behavior changes: the interference pattern disappears, and the electrons land as if they were discrete particles.

The implication is staggering: Matter does not exist in a definite state until it is measured. Like a game engine, reality remains in a low-resource state of probability until the data is requested by an observer. This suggests a "computational budget" for the universe—it only computes the hard data when it absolutely has to.

Reference: Feynman, R. P., Leighton, R. B., & Sands, M. (1965). The Feynman Lectures on Physics, Vol. III.

Quantum Entanglement: Bypassing the Latency

Quantum entanglement occurs when two particles become linked so that measuring one instantly fixes the correlated outcome for the other, regardless of whether they are centimeters or light-years apart. Albert Einstein famously called this "spooky action at a distance" because it appears to outrun light, the universal speed limit, even though no usable signal can be sent this way.

However, in a simulation, "distance" is an illusion created for the inhabitants. Two particles on opposite sides of the universe are just two bits of data located in the same "memory array." Entanglement updates both variables simultaneously at the "kernel" level, bypassing the simulated "latency" of the speed of light.
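The "same memory array" picture can be sketched in a few lines. This is only the programming analogy, with hypothetical names (`SharedRecord`, `ParticleHandle`, `make_pair`); it reproduces simple anti-correlated outcomes and is not a model of the full statistics measured in Bell-test experiments.

```python
import random


class SharedRecord:
    """A single value in memory; undetermined (None) until first read."""

    def __init__(self):
        self.bit = None


class ParticleHandle:
    """A view onto the shared record; `inverted` flips the reported bit."""

    def __init__(self, record, inverted):
        self.record = record
        self.inverted = inverted

    def measure(self, rng):
        # The first measurement through either handle fixes the shared value.
        if self.record.bit is None:
            self.record.bit = rng.randint(0, 1)
        return self.record.bit ^ int(self.inverted)


def make_pair():
    """Create two handles that point at the same underlying record."""
    record = SharedRecord()
    return ParticleHandle(record, False), ParticleHandle(record, True)


rng = random.Random(42)
results = []
for _ in range(5):
    a, b = make_pair()
    results.append((a.measure(rng), b.measure(rng)))
print(results)
```

No message passes between the two handles. They agree because they read one record, which is the point the analogy is making about simulated "distance."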

Reference: Aspect, A., et al. (1982). Experimental Test of Bell's Inequalities.

2. The Speed of Light (c): The System's Clock Speed

In our universe, nothing can travel faster than light (c, exactly 299,792,458 meters per second by definition of the meter). We often think of this as a property of light, but it is actually a property of spacetime itself.

Why is there a speed limit at all? In a base, infinite reality, there is no logical reason for a maximum velocity. However, in a computer simulation, a speed limit is a technical necessity. c represents the clock speed of the processor running our reality. It is the maximum rate at which information can be transmitted from one "pixel" of the universe to the next.
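A toy model makes the "pixel to pixel" idea concrete. In the sketch below, a one-dimensional grid is updated once per tick, and each cell can only be influenced by its immediate neighbors, so a signal can never spread faster than one cell per tick. The grid size and update rule are assumptions chosen for illustration, not a claim about real physics.

```python
def tick(cells):
    """Advance one time step: a cell turns on if it or a neighbor was on."""
    n = len(cells)
    nxt = []
    for i in range(n):
        left = cells[i - 1] if i > 0 else 0
        right = cells[i + 1] if i < n - 1 else 0
        nxt.append(left | cells[i] | right)
    return nxt


def run(cells, ticks):
    """Apply `tick` repeatedly and return the final grid."""
    for _ in range(ticks):
        cells = tick(cells)
    return cells


universe = [0] * 21
universe[10] = 1  # a single event in the middle of the grid

after_three = run(universe, 3)
print("".join(str(c) for c in after_three))
```

After three ticks the disturbance has reached exactly three cells in each direction. The local update rule itself enforces the speed limit.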

The Energy Barrier: Enforcing the Limit

Crucially, c is fundamentally unachievable for anything with mass. As an object approaches the speed of light, the energy needed to keep accelerating it grows without bound: its total energy scales with the Lorentz factor, 1 / sqrt(1 - v^2/c^2), which diverges as v approaches c. To actually reach c, an object would require infinite energy, an impossible demand on the system's resources.
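To see how steep this barrier is, the sketch below evaluates the standard special-relativity formulas for the Lorentz factor and for kinetic energy, (gamma - 1) * m * c^2. The function names and the 1 kg test mass are arbitrary choices for the example.

```python
import math

C = 299_792_458.0  # speed of light in m/s (exact by SI definition)


def lorentz_factor(beta):
    """gamma = 1 / sqrt(1 - beta^2), where beta = v / c and 0 <= beta < 1."""
    if not 0.0 <= beta < 1.0:
        raise ValueError("beta must satisfy 0 <= beta < 1")
    return 1.0 / math.sqrt(1.0 - beta * beta)


def kinetic_energy_joules(mass_kg, beta):
    """Relativistic kinetic energy, (gamma - 1) * m * c^2."""
    return (lorentz_factor(beta) - 1.0) * mass_kg * C * C


for beta in (0.5, 0.9, 0.99, 0.999, 0.9999):
    gamma = lorentz_factor(beta)
    energy = kinetic_energy_joules(1.0, beta)
    print(f"v = {beta}c  gamma = {gamma:8.2f}  KE of 1 kg = {energy:.3e} J")
```

Each extra "9" in the speed multiplies the energy bill several times over, and at beta = 1 the formula divides by zero, which is why the function rejects that input.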

From a computational perspective, this is a brilliant "failsafe." By making the energy cost diverge as the limit is approached, the "Developers" ensured that no matter how much "power" an inhabitant harnesses, they can never move fast enough to outrun the rendering engine or cause a synchronization error. If an object could move at infinite speed, the simulation would crash; c is the universal buffer that prevents the program from outrunning its own processing power.

3. The Expert Consensus: Why the Brightest Minds are Listening

This isn't just a thought experiment; it is a serious point of contention for those at the top of the scientific and technological fields.

The Engineering Perspective: Elon Musk

Elon Musk bases his logic on the exponential rate of technological progress.

"40 years ago, we had Pong—two rectangles and a dot. Now, 40 years later, we have photorealistic 3D simulations with millions of people playing simultaneously. If you assume any rate of improvement at all, the games will become indistinguishable from reality."

— Elon Musk, 2016 Code Conference

The Astrophysical Perspective: Neil deGrasse Tyson

During the 2016 Isaac Asimov Memorial Debate, Tyson noted that the likelihood of us being in a simulation is "better than 50-50." He points to the gap between human and chimpanzee intelligence (only 1% difference in DNA) and suggests that a civilization even slightly "higher" than us would view our greatest achievements as simple "nursery rhymes."

4. The Mandela Effect: Database Inconsistencies?

Beyond the microscopic world of physics, we see potential glitches in the "user interface" of reality itself. This is famously known as the Mandela Effect, a term coined in 2010 by researcher Fiona Broome. The phenomenon is named after Nelson Mandela, whom Broome and thousands of others distinctly remembered dying in prison in the 1980s, complete with a televised funeral and a mourning widow. In recorded history, he was released in 1990, served as President of South Africa from 1994 to 1999, and died in 2013. This isn't a simple case of one person being mistaken; it is a widespread, collective discrepancy between human memory and recorded history.

In a simulation context, these phenomena look remarkably like legacy data or remnants of a dirty write. Imagine the "programmers" of our reality performing a "hotfix"—updating a brand logo, a famous movie line, or a geographical coordinate—but failing to achieve a 100% successful replication across every user's memory cache. The result is a fragmented database where a significant subset of the population is still running a "cached" version of the previous code.

Take, for example, the widely held belief in the Fruit of the Loom cornucopia. Millions of people can describe the specific texture and placement of the "horn of plenty" on their clothing labels, yet corporate archives and patent filings show it has never existed in this version of the "build." We see the same architectural "glitch" in popular culture: most people remember Darth Vader’s iconic revelation as "Luke, I am your father," yet the current cinematic file reads: "No, I am your father." Similarly, the collective memory of C-3PO usually depicts him as entirely gold, despite the original trilogy files showing a distinctly silver lower right leg—an asset detail that many fans "missed" for decades until the metadata seemingly shifted.

Even the map of the world itself shows signs of a "rendering update." A massive number of people remember South America being situated directly south of North America, yet the current coordinates show the continent shifted drastically to the east. When these users look at a globe today, it feels like a "logic error" because the longitudinal data in their mental cache doesn't align with the server-side render. Whether it is the spelling of the Berenstain Bears (which millions remember ending in "-ein") or the Monopoly Man’s missing monocle, these inconsistencies suggest we are holding onto the metadata of a prior build that was supposed to be overwritten.

When thousands of unrelated nodes in a network report the same "error" that contradicts the central server, an engineer doesn't blame the nodes—they look for a synchronization error in the last update. These aren't "false" memories; they are the ghost files of a reality that has been patched.

Reference: Broome, F. (2010). The Mandela Effect.

Reference: French, C. C. (2003). Fantastic Memories: The Role of Suggestibility and Mental Imagery.

Reference: Prasad, D., & Bainbridge, W. A. (2022). The Visual Mandela Effect as Evidence for Shared and Specific False Memories Across People. Psychological Science.

5. Algorithmic Nature: The Golden Ratio and Fractals

If you were coding a procedural universe, you wouldn't design every leaf and galaxy from scratch. You would use recursive algorithms—simple formulas that create infinite complexity.

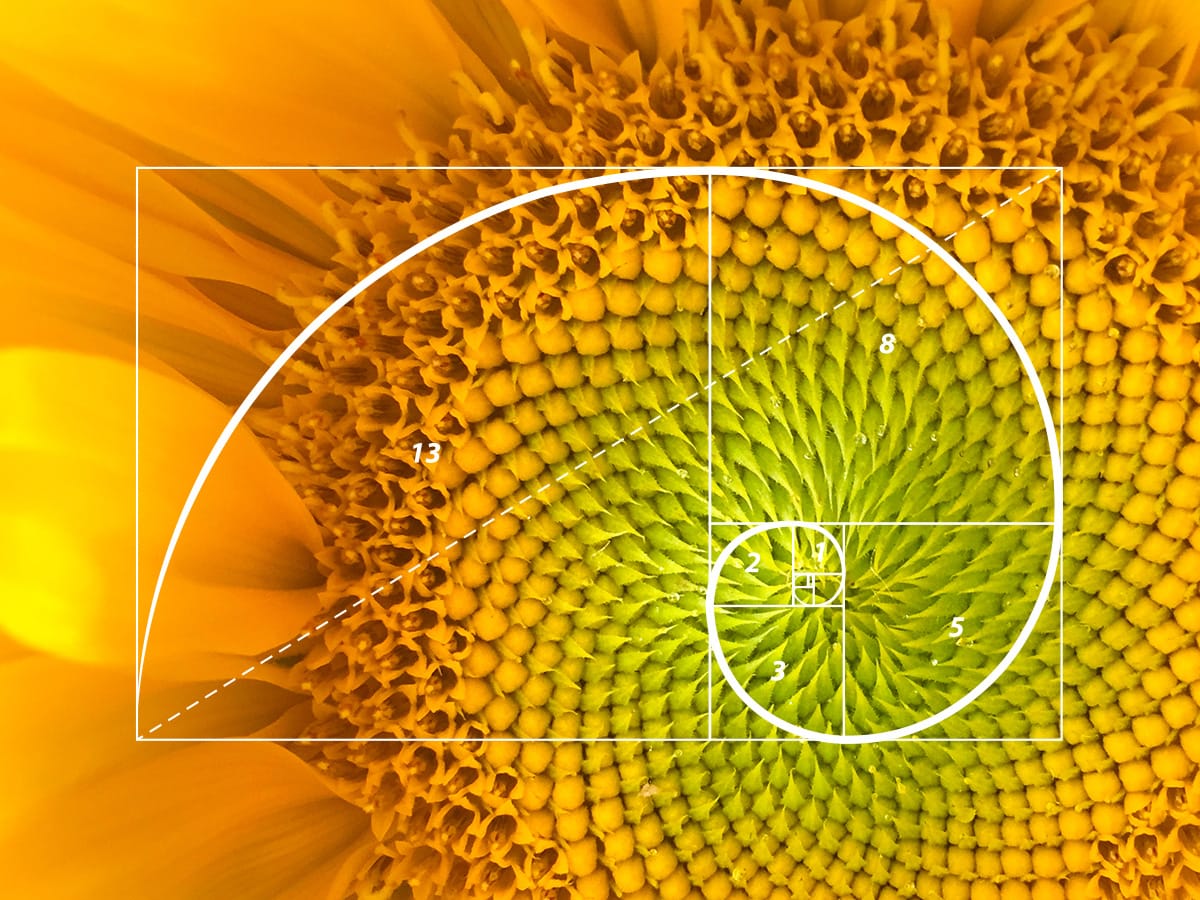

The Golden Ratio (φ)

The Golden Ratio, represented in mathematics by the Greek letter φ (phi) and approximately equal to 1.618, appears in the spiral of galaxies, the structure of hurricanes, and even the proportions of human DNA. This recurrence suggests the universe is built on self-similar, fractal-like geometry, the most efficient way to generate massive, detailed environments using minimal code.
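A small example shows how a two-rule recursive procedure can produce φ on its own. The sketch below uses Lindenmayer's classic "algae" rewriting system (A becomes AB, B becomes A); the string lengths it produces are Fibonacci numbers, and the ratio of successive lengths approaches φ. The function name `grow` and the choice of 12 generations are arbitrary.

```python
import math

PHI = (1 + math.sqrt(5)) / 2  # the Golden Ratio, about 1.618

RULES = {"A": "AB", "B": "A"}  # Lindenmayer's "algae" rewriting rules


def grow(axiom, generations):
    """Apply the rewriting rules to every symbol, once per generation."""
    s = axiom
    for _ in range(generations):
        s = "".join(RULES[ch] for ch in s)
    return s


lengths = [len(grow("A", g)) for g in range(12)]
ratios = [b / a for a, b in zip(lengths, lengths[1:])]

print(lengths)
print(f"last ratio = {ratios[-1]:.6f}, phi = {PHI:.6f}")
```

Two rules and one starting symbol are enough to generate the whole sequence, which is the kind of economy a procedural generator relies on.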

Reference: Livio, M. (2002). The Golden Ratio.

6. The Logical Framework: Bostrom’s Trilemma and the Infinite Regress

In 2003, Oxford philosopher Nick Bostrom proposed that at least one of these three things must be true:

- Nearly all civilizations go extinct before they can run such simulations.

- Civilizations reach that stage but have no interest in running them.

- We are almost certainly living in a simulation.

The Theory of Nested Simulations

If we assume Statement 3 is true, we run into the concept of Nested Simulations. Imagine a "Base Reality" civilization that starts a simulation (Level 1). The inhabitants of Level 1 eventually build a computer and start their own simulation (Level 2), and so on. This creates a "Simulation Stack" that could theoretically be thousands of levels deep.

This mirrors nested virtualization in computing, where one virtual machine runs inside another. Neither guest "knows" it isn't running on bare metal. Each layer operates under the illusion of direct hardware access, while in reality its entire existence is being mediated by a hypervisor one level up.

The 1:Billions Ratio

From a statistical standpoint, this creates a "Tower of Power" problem. If every parent universe hosts dozens or thousands of child universes, the number of simulated realities grows exponentially as you move down the stack. If there is only one "Base Reality" but a billion nested simulations beneath it, the probability that we are the "Top Level" inhabitants is roughly 1 in 1,000,000,000, assuming we are equally likely to find ourselves in any one of those worlds.
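The arithmetic behind this ratio can be sketched under two loudly stated assumptions: every world hosts the same number of child simulations, and an observer is equally likely to be in any world in the stack. The function names and the sample numbers (10 children per world, 9 levels deep) are illustrative only.

```python
from fractions import Fraction


def simulated_worlds(children_per_world, depth):
    """Total simulations below base reality for a uniform branching factor."""
    return sum(children_per_world ** level for level in range(1, depth + 1))


def chance_of_base_reality(children_per_world, depth):
    """Odds of being in the single base reality, treating all worlds alike."""
    total_worlds = 1 + simulated_worlds(children_per_world, depth)
    return Fraction(1, total_worlds)


sims = simulated_worlds(10, 9)
odds = chance_of_base_reality(10, 9)
print(f"{sims:,} simulations, chance of base reality = 1 in {odds.denominator:,}")
```

With only ten children per world, nine levels of nesting already pass the billion mark, so the exact branching factor matters far less than the depth of the stack.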

Reference: Bostrom, N. (2003). Are You Living in a Computer Simulation?

7. Error-Correcting Codes in the Math

Perhaps the closest thing to a "smoking gun" comes from S. James Gates Jr., a theoretical physicist at the University of Maryland. While working on the supersymmetric equations that underlie superstring theory, the mathematical attempt to unify all forces of nature, Gates discovered something startling: binary error-correcting codes embedded in the equations meant to describe our universe.

Specifically, Gates found "doubly-even self-dual linear binary block codes." To a DevOps engineer or a software dev, this is a "holy shit" moment. This is not just "math that looks like code"; these are the exact same types of algorithms (like Claude Shannon’s error-correction math) used by web browsers, ECC RAM, and deep-space communications to ensure data isn't corrupted by noise or transmission errors.

Finding these specific mathematical structures in the laws of physics is the equivalent of finding a try/catch block or a parity bit in the middle of a forest. It implies that the universe isn't just a physical happenstance; it is a system that is actively "debugging" itself in real-time to maintain stability and prevent the "simulation" from crashing due to entropy or bit-rot.
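For readers who want to see what "doubly-even self-dual" means in practice, the sketch below checks both properties for the [8,4] extended Hamming code, a standard textbook member of that class. It is used here purely as an illustration and is not claimed to be the particular code that appears in Gates's equations; the helper names are made up for the example.

```python
from itertools import product

# Generator matrix of the [8,4] extended Hamming code in the form [I | A].
G = [
    [1, 0, 0, 0, 0, 1, 1, 1],
    [0, 1, 0, 0, 1, 0, 1, 1],
    [0, 0, 1, 0, 1, 1, 0, 1],
    [0, 0, 0, 1, 1, 1, 1, 0],
]


def codewords(gen):
    """Yield every GF(2) linear combination of the generator rows."""
    n = len(gen[0])
    for coeffs in product((0, 1), repeat=len(gen)):
        word = [0] * n
        for c, row in zip(coeffs, gen):
            if c:
                word = [w ^ r for w, r in zip(word, row)]
        yield tuple(word)


def is_self_orthogonal(gen):
    """True if every pair of rows has an even number of shared 1s."""
    return all(
        sum(a & b for a, b in zip(r1, r2)) % 2 == 0 for r1 in gen for r2 in gen
    )


words = list(codewords(G))
weights = sorted(sum(w) for w in words)

# Doubly-even: the number of 1s in every codeword is a multiple of 4.
doubly_even = all(wt % 4 == 0 for wt in weights)
# Self-dual: the code is orthogonal to itself and has dimension n / 2.
full_rank = len(set(words)) == 2 ** len(G)
self_dual = is_self_orthogonal(G) and full_rank and 2 * len(G) == len(G[0])

print(weights, doubly_even, self_dual)
```

The 16 codewords have weights 0, 4, or 8 only, and any two of them differ in at least four positions. That spacing is what lets a receiver detect corrupted bits and correct a single flipped one.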

Reference: Gates Jr, S. J. (2010). Symbols of Power. Physics World.

Conclusion: The Logic of the OS and the Frame of Reference

When we combine the "lazy rendering" of quantum mechanics, the universal speed limit of c, and the error-correcting codes of string theory, the conclusion becomes harder to ignore. The universe doesn't behave like matter; it behaves like data.

As we continue to develop our own VR and AI, we aren't just creating entertainment; we are essentially retracing the steps of our own "programmers." The ultimate discovery of science may not be a new particle, but the realization that the "Laws of Physics" are simply the configuration files of the operating system we inhabit.

However, at the end of the day, what matters most is the frame of reference of the observer (us). Just as a VM nested within another VM runs its code perfectly within its own sandbox, our reality is defined by the boundaries we inhabit. Whether this is "base reality" or a sub-routine of a sub-routine is ultimately irrelevant. If the physics are consistent and the experience is total, then it is the only reality we will ever see. We aren't just characters in a program; we are the observers within the system, navigating the environment that—whether simulated or not—is real enough to matter.